AMD has filed a new patent describing a GPU chiplet design. Besides explaining the chiplet design in detail, AMD also describes the problems that come with it and how it intends to solve them.

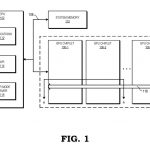

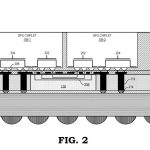

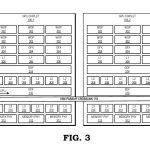

The patent, found by @davideneco25320, begins by laying out several problems that come with a GPU chiplet design. Unlike typical CPU workloads, GPU workloads are highly parallel. Distributing that work across multiple cores is inefficient because synchronising memory contents across the whole system is computationally expensive and difficult. Today's GPUs feature many cores, but applications and programs see the GPU as a single device.

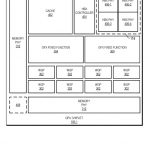

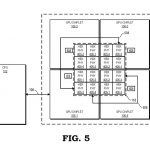

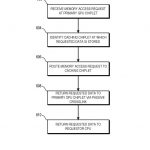

To avoid these issues, AMD intends to couple groups of GPU chiplets using “high bandwidth passive crosslinks”. The CPU would communicate directly with the first GPU chiplet, while the second GPU chiplet would communicate with the first one through a passive crosslink. This crosslink would act as a passive interposer dedicated to chiplet-to-chiplet communication.

In a standard GPU design, the graphics processor has a dedicated last-level cache (LLC). With its GPU chiplet design, AMD proposes pairing each chiplet with its own LLC while keeping all of the LLCs in communication with one another. This way, according to the patent, the cache is “unified and remains coherent across all GPU chiplets”.

Despite the patent, there's no confirmation that AMD will launch a GPU using a similar architecture in the near future. Rumours about a chiplet design for the RDNA3+ GPU architecture have been floating around, but AMD hasn't confirmed anything yet.

AMD already has experience with chiplet designs, as we have seen in its Ryzen CPUs, but it's not the only company working on GPU chiplets. Nvidia and Intel are also expected to release graphics processors based on their own GPU chiplet designs. Nvidia should debut its GPU chiplet design with the Hopper GPU architecture, while Intel will be releasing its tile-based Xe-HP graphics cards later this year.

KitGuru says: Are GPU chiplets the future of GPU architectures? When do you think we'll see this new tech make its way to the consumer market?

KitGuru KitGuru.net – Tech News | Hardware News | Hardware Reviews | IOS | Mobile | Gaming | Graphics Cards