The development of ever-smarter artificial intelligence is set to be a major focus for top-tier tech firms over the next few years, but Google looks to have the jump on most of them. It has developed its own AI processor, designed specifically for demanding machine-learning workloads, and has been testing its effectiveness over the past few years.

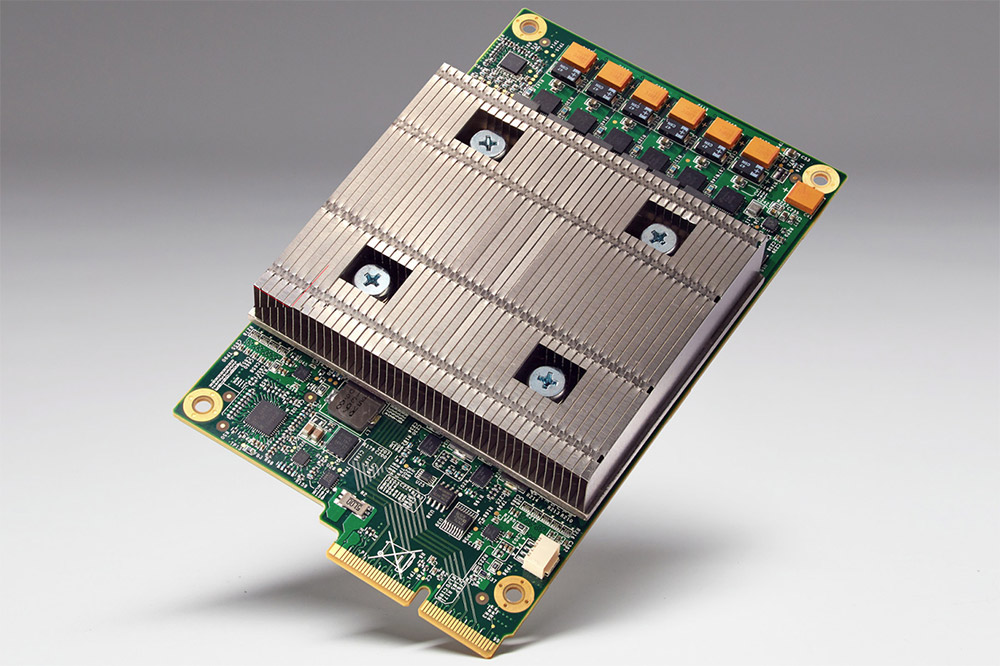

It's called the Tensor Processing Unit (TPU), a chip built specifically with machine learning in mind, which means its main focus is raw power. It's much faster than your average chip of similar size and power draw, and it can fit neatly into a hard drive bay in a data centre rack (as per Engadget).

It looks to be passively cooled, too. You can imagine that a huge array of these would provide plenty of thinking power, with an ominous silence hanging over the whole data centre.

These chips have already proved effective too, having been used to improve different parts of Google's services, like its mapping systems. TPUs were also used to help the AlphaGo system beat a Go champion earlier this year.

Although Google has no plans to make this chip a commercial product (no doubt preferring to keep its AI development hardware to itself), we should all feel the benefits over the next few years. As automated services become more nuanced, we'll likely have TPUs to thank for that, at least in part.

KitGuru Says: Considering how quickly hardware for tasks like Bitcoin mining has developed, expect AI-focused hardware to become far, far more powerful in a short period of time.

KitGuru KitGuru.net – Tech News | Hardware News | Hardware Reviews | IOS | Mobile | Gaming | Graphics Cards

Correct me if I am wrong, but that does not appear to be passively cooled. Instead, it appears to be the low-profile, directed cooling used for parts in a rack-mounted server. More likely, the fans are hot-swappable or have tool-less removal, are mounted elsewhere in the case, and provide airflow through the whole case.

Yeah, that’s not a passive cooler. It's definitely a 1U-sized heatsink, designed to be fed by multiple high-speed case fans; that's fairly standard fare for high-density rack servers.

To run effectively with such a small heatsink, the chip's TDP would have to be extremely low, likely in the sub-30 W range, which seems unlikely given the size of the VRM section. Even at such a low TDP, significant cooling power and airflow would still be needed to get the heat out of the servers and out of the datacenter.
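A rough back-of-the-envelope sketch of the airflow point: the standard sensible-heat relation gives the volumetric airflow needed to carry away a heat load P as V = P / (ρ · c_p · ΔT). Every number below is an assumption for illustration, not a measurement of the TPU: a hypothetical 30 W chip, air density of about 1.2 kg/m³, specific heat of about 1005 J/(kg·K), and a 10 K allowed rise in air temperature across the heatsink. The function name `required_airflow_cfm` is likewise just illustrative.

```python
# Rough sensible-heat airflow estimate: V = P / (rho * c_p * delta_T).
# All inputs are illustrative assumptions, not TPU measurements.

AIR_DENSITY = 1.2           # kg/m^3, approx. air at sea level, ~20 C (assumed)
AIR_SPECIFIC_HEAT = 1005.0  # J/(kg*K), approx. for dry air (assumed)
M3S_TO_CFM = 2118.88        # 1 m^3/s expressed in cubic feet per minute


def required_airflow_cfm(heat_watts: float, delta_t_kelvin: float) -> float:
    """Ideal airflow (CFM) to remove heat_watts with an air temperature rise of delta_t_kelvin."""
    if delta_t_kelvin <= 0:
        raise ValueError("temperature rise must be positive")
    # Volumetric flow in m^3/s from the sensible-heat equation.
    flow_m3s = heat_watts / (AIR_DENSITY * AIR_SPECIFIC_HEAT * delta_t_kelvin)
    return flow_m3s * M3S_TO_CFM


if __name__ == "__main__":
    # Hypothetical 30 W chip with a 10 K allowed rise across the heatsink.
    per_chip = required_airflow_cfm(30.0, 10.0)
    print(f"Per chip: {per_chip:.1f} CFM")
    # A rack of such boards multiplies the load; 40 chips is an arbitrary example.
    print(f"40 chips: {40 * per_chip:.0f} CFM")
```

Under these assumptions the ideal figure is only about 5.3 CFM per chip, but that assumes every bit of air passes through the fins. In practice, some fan airflow bypasses the heatsink and a dense fin stack resists flow, which is why rack servers rely on multiple high-speed fans, and the heat still has to be removed from the room as a whole.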