Cooling

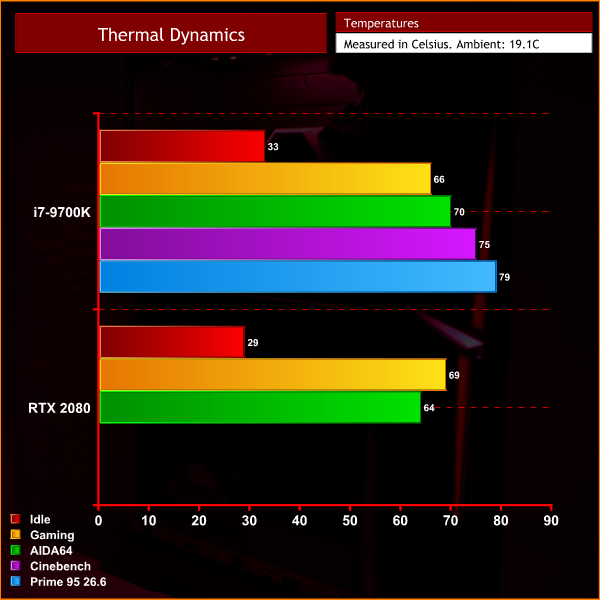

We ran a number of tests to stress the Trident X to the limit, and I have to say it performs very well in the thermal department. Yes, it benefits from the fact that MSI did not overclock the CPU, but this is still an 8-core chip running at 4.6GHz across all cores, and to see it peak no hotter than 79°C, even in Prime95 (version 26.6), is mighty impressive for a system this compact. That's a worst-case scenario as well: if you're only gaming, the CPU won't go above 66°C.

The GPU, too, ran no hotter than 69°C when playing Shadow of the Tomb Raider at 4K, and that was with the core clock sitting between 1845MHz and 1860MHz, which is a pretty good result for an RTX 2080.

Noise

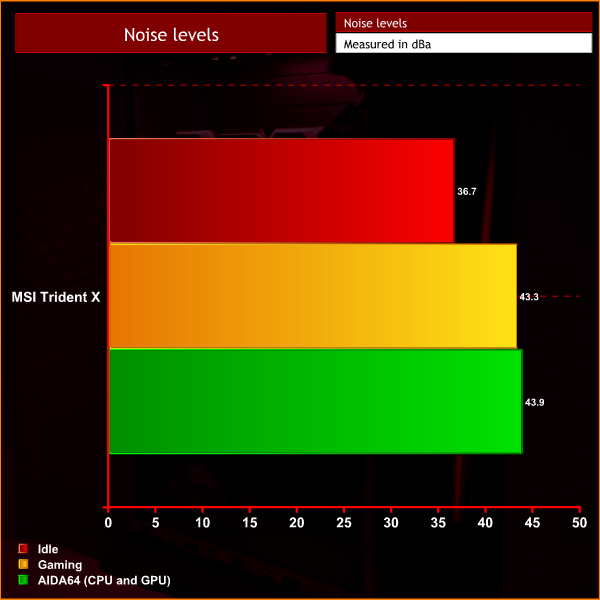

What impresses me most about the Trident X, however, is the noise level: it is a very quiet system. With our sound meter positioned 1 foot away from the case, peak noise output did not exceed 44dB, making it very easy on the ears. In comparison, the ASUS GL12CX we have already mentioned peaked at over 60dB, making it significantly louder despite being a much bigger machine.
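To put that gap in perspective, here is a rough sketch of what a 16dB difference means. It uses the standard decibel definition for the intensity ratio, plus the common rule of thumb that perceived loudness roughly doubles for every 10dB. The function names are my own, and because the GL12CX peaked at "over" 60dB, the results should be read as lower bounds rather than exact figures.

```python
def intensity_ratio(louder_db: float, quieter_db: float) -> float:
    """Ratio of sound intensity between two decibel readings."""
    return 10 ** ((louder_db - quieter_db) / 10)


def perceived_loudness_ratio(louder_db: float, quieter_db: float) -> float:
    """Rule-of-thumb estimate: perceived loudness doubles per 10dB."""
    return 2 ** ((louder_db - quieter_db) / 10)


# Peak readings quoted in this review, both taken 1 foot from the case.
TRIDENT_X_DB = 44.0
ASUS_GL12CX_DB = 60.0  # the review says "over 60dB", so this is a floor

if __name__ == "__main__":
    intensity = intensity_ratio(ASUS_GL12CX_DB, TRIDENT_X_DB)
    loudness = perceived_loudness_ratio(ASUS_GL12CX_DB, TRIDENT_X_DB)
    print(f"Sound intensity: at least {intensity:.1f}x higher")
    print(f"Perceived loudness: roughly {loudness:.1f}x (rule of thumb)")
```

The perceived-loudness figure is only an approximation, as how loud something seems depends on the frequency content of the noise and on the listener.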

I have to say, this is a very good bit of engineering from MSI. It's a compact system, yet temperatures are well controlled and the noise it makes is innocuous. I had a bad experience last time around when looking at the Aegis 3, as that was very whiny, but the Trident X produces just a gentle whirr that really isn't bothersome at all.

Power

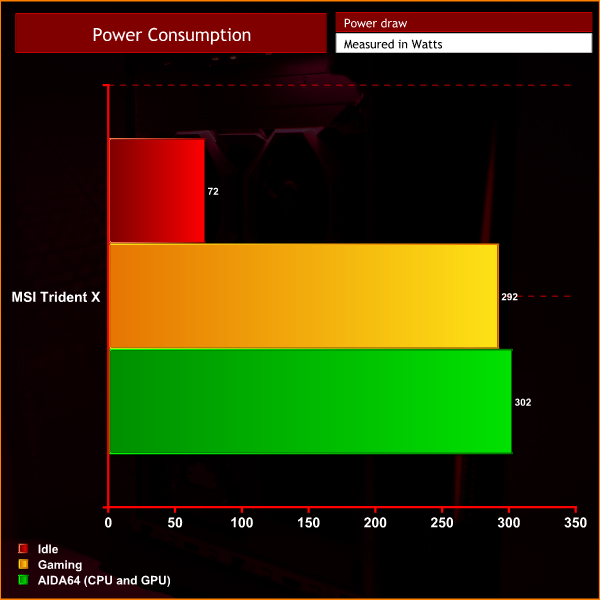

Lastly, we come to power consumption. Here, the Trident X drew about 290-300W under load, whether it was gaming or being stressed in AIDA64. This means the PSU is operating at just under 50% load, which should ensure high levels of efficiency. There is also ample headroom if you want to upgrade either the CPU or GPU down the line, though there is obviously no scope for SLI as this is an ITX rig.
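The load arithmetic can be sketched as below. The PSU rating is not restated in this section, so the 650W figure is an assumption chosen to be consistent with the "just under 50%" claim; swap in the real rating if it differs. The helper names are also my own. Bear in mind the draw figures are whole-system readings, so the load on the PSU's output side would be a little lower once conversion losses are accounted for.

```python
# Assumed PSU rating in watts; not stated in this section of the review.
ASSUMED_PSU_RATING_W = 650.0


def psu_load_fraction(draw_watts: float, rating_watts: float) -> float:
    """Fraction of the PSU's rated capacity in use at a given draw."""
    return draw_watts / rating_watts


def headroom_watts(draw_watts: float, rating_watts: float) -> float:
    """Remaining rated capacity at a given draw."""
    return rating_watts - draw_watts


if __name__ == "__main__":
    # Load range measured in the review.
    for draw in (290.0, 300.0):
        load = psu_load_fraction(draw, ASSUMED_PSU_RATING_W)
        spare = headroom_watts(draw, ASSUMED_PSU_RATING_W)
        print(f"{draw:.0f}W draw: {load:.1%} load, {spare:.0f}W headroom")
```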

KitGuru KitGuru.net – Tech News | Hardware News | Hardware Reviews | IOS | Mobile | Gaming | Graphics Cards
