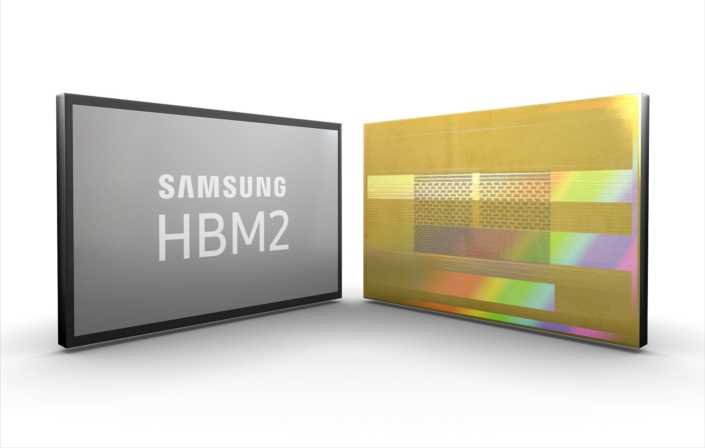

This week, Samsung began ramping up production of its second-generation High-Bandwidth Memory (HBM2) DRAM in preparation for increased demand. Samsung will be producing 8GB stacks with bandwidth of up to 256GB/s per stack, the highest performance to date.
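The 256GB/s figure is a per-stack peak, and it follows from the width of the stack's interface multiplied by the data rate of each pin. Below is a minimal Python sketch of that arithmetic. It assumes a 1024-bit interface per stack and 2.0Gbit/s per pin; neither figure is stated in this article, and the function name is illustrative only.

```python
# Peak per-stack bandwidth for an HBM2 stack.
# Assumptions (not stated in the article): a 1024-bit interface per stack
# and a data rate of 2.0 Gbit/s per pin.
INTERFACE_WIDTH_BITS = 1024
PIN_RATE_GBPS = 2.0


def peak_bandwidth_gbytes(width_bits: int, pin_rate_gbps: float) -> float:
    """Return peak bandwidth in GB/s: total Gbit/s across all pins divided by 8."""
    return width_bits * pin_rate_gbps / 8


print(peak_bandwidth_gbytes(INTERFACE_WIDTH_BITS, PIN_RATE_GBPS))  # 256.0
```

This is a theoretical peak; real-world throughput on a graphics card will be lower, and cards that run their HBM2 at a lower per-pin rate get proportionally less bandwidth per stack.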

Both AMD and Nvidia use HBM2 on graphics cards. AMD will be using HBM2 across its entire Vega line-up, while Nvidia uses it on the Tesla V100, its first Volta-based GPU. Samsung hasn't said which company it is increasing HBM2 production for, though.

Speaking about Samsung's HBM2 production plans, the company's executive VP of memory sales, Jaesoo Han, said: “By increasing production of the industry’s only 8GB HBM2 solution now available, we are aiming to ensure that global IT system manufacturers have sufficient supply for timely development of new and upgraded systems. We will continue to deliver more advanced HBM2 line-ups, while closely cooperating with our global IT customers”.

Samsung's 8GB HBM2 consists of eight 8-gigabit HBM2 dies and a buffer die at the bottom of the stack. The dies are stacked vertically and interconnected by through-silicon vias (TSVs) and microbumps, with each die containing over 5,000 TSVs. In total, a single 8GB HBM2 package contains more than 40,000 TSVs. Because so many TSVs are available, including spares, data paths can be switched to different TSVs when a delay in data transmission occurs, which keeps performance high and consistent.
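The capacity and TSV totals above are simple multiplication, shown here as a quick Python sanity check. The sketch assumes the 40,000 figure counts only the eight core DRAM dies, and it uses 5,000 TSVs per die as a lower bound since the article says "over 5,000". The constant and function names are hypothetical.

```python
# Back-of-the-envelope check of the 8GB HBM2 stack figures.
DIES_PER_STACK = 8        # eight core DRAM dies (buffer die excluded)
DIE_CAPACITY_GBIT = 8     # 8 gigabits per die
TSVS_PER_DIE = 5000       # "over 5,000" per die, used here as a lower bound


def stack_capacity_gbytes(dies: int, gbit_per_die: int) -> float:
    """Total stack capacity in gigabytes (8 bits per byte)."""
    return dies * gbit_per_die / 8


def min_total_tsvs(dies: int, tsvs_per_die: int) -> int:
    """Lower bound on the TSV count across the core dies (assumption: buffer die not counted)."""
    return dies * tsvs_per_die


print(stack_capacity_gbytes(DIES_PER_STACK, DIE_CAPACITY_GBIT))  # 8.0
print(min_total_tsvs(DIES_PER_STACK, TSVS_PER_DIE))  # 40000
```

Eight 8-gigabit dies give 64 gigabits, which is the 8GB in the product name.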

With demand increasing, Samsung aims for 8GB HBM2 to account for around half of its HBM output by the first half of next year.

KitGuru Says: It looks like we can expect to see more HBM2 floating around over the next year or so. Do you guys think HBM will take over from GDDR5X on high-end graphics cards next generation?

KitGuru KitGuru.net – Tech News | Hardware News | Hardware Reviews | IOS | Mobile | Gaming | Graphics Cards

Increased demand? That’ll be for nVidia cards then…

Could also be for Intel’s and Xilinx’s FPGAs. See the Stratix 10 as an example.

Vega will be out soon, so they probably already have orders in place for HBM2. Demand could go up just for this; it doesn't matter that the cards aren't on the market yet.

Why isn't HBM2 made available as memory sticks to be used as RAM on regular motherboards?

Likely because the motherboard would have to be designed to run off HBM chips rather than DDR4, and quite possibly the memory controller in the CPU would have to change too.

Yeah… I hear you. But it could, maybe, give a big push to current PC performance, and the cost benefits could spread across the whole industry. Something similar is already happening with 3D XPoint non-volatile memory.