Nvidia have had a rocky year, with Fermi delays costing them sales and market share. When the GTX480 and 470 were released, people still weren't sold on the new architecture, as it ran hot, drew a lot of power and didn't really offer considerable improvements over the current ATI HD5000 series hardware. With the release of the GTX460, however, the public finally embraced Fermi, and rightly so: for the price, these cards are the market leaders right now, with astounding performance, low power drain, cool running temperatures and massive overclocking potential.

Today we are looking at a pair of the latest Gigabyte cards, which not only offer preconfigured overclocks but also have a very impressive looking heatpipe cooler with a dual fan configuration. We are also going to test the cards in SLI and put them head to head against ATI's HD5870s running in Crossfire. Why? Well, we noticed that SLI performance with these cards is top drawer, and we wanted to pit them against the high end AMD boards to see just how much performance you get for the money.

The Gigabyte cards are sold with clocks at 715MHz for the core and 900MHz for the memory. The GTX 460 reference clocks are 675MHz core and 900MHz memory.
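To put those clocks in perspective, here is a minimal sketch of the arithmetic behind the factory overclock. The function name `overclock_percent` is our own illustration, not anything from Gigabyte or Nvidia; the clock figures are the ones quoted above.

```python
def overclock_percent(reference_mhz: float, shipped_mhz: float) -> float:
    """Return the percentage increase of the shipped clock over the reference clock."""
    return (shipped_mhz - reference_mhz) / reference_mhz * 100.0


# Clock speeds as quoted in the text (MHz).
core_gain = overclock_percent(675, 715)
memory_gain = overclock_percent(900, 900)

print(f"Core: +{core_gain:.1f}%")
print(f"Memory: +{memory_gain:.1f}%")
```

In other words, the core ships roughly 6% above reference while the memory is left at stock speed.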

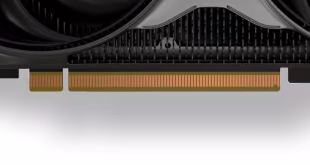

GIGABYTE Ultra Durable VGA Series

- Powered by NVIDIA GeForce GTX 460 GPU

- Integrated with 1024 MB / 256-bit GDDR5 video memory

- GIGABYTE WINDFORCE™ cooling design

- GIGABYTE Ultra Durable VGA High Quality Components

- Supports Microsoft DirectX 11 and OpenGL 4.0

- NVIDIA SLI Ready

- NVIDIA Pure Video HD technology & 3D Vision Surround Ready

- Supports NVIDIA PhysX / CUDA technology

- Features 2 x Dual Link DVI-I / mini-HDMI 1.3a connectors

- 5.5% better performance than standard HD 5850

- 15% better performance than standard HD 5830

- 4x the DX11 tessellation performance of HD 5830

KitGuru KitGuru.net – Tech News | Hardware News | Hardware Reviews | IOS | Mobile | Gaming | Graphics Cards

That is a fantastic board. SLI scaling is impressive, always has been.

Good all round boards, but I keep wondering if it is just too little too late. Rumours on the net say ATI's next solutions are out in a month's time.

I love the 460, only card from Nvidia I've rated in 2 years. 450 not so much.

SLI performance is strong. These cards overclock like crazy.

ermm dont these seem a bit expensive compared to 460s at 145-150 ?

the cheaper models are normally 768mb versions though, not worth picking up

I still think the HD5850 is a better buy. It's faster and with AMD you get better drivers and support.

5850 is priced higher + not always faster card, thus not the best buy… yet…

I agree with Jordan – HD5850 is quite a bit more expensive still.

Unless you get a HD5850 on a sale deal, it's costing more. And if you manually OC these 460s you get HD5850 performance anyway. That's the whole selling point from Nvidia.

Nvidia are panicking tho. They know ATI's new cards are coming soon. It's a reduced sale to sell as many cards as possible before everyone goes back to ATI.

everyone needs to stop calling them ATI 😉 that name is no more. unfortunately

lol yeah, i dont think anyone cares about the name change

AMD better pull their socks up some and get their new cards to market, since the GTX 460s in SLI are so close to 5870s in Crossfire for half the price.