Game streaming site Twitch has announced new automated moderation tools that will try to analyse the intent of messages and block those deemed hateful or abusive. Designed to do what no team of human moderators could achieve, AutoMod will, Twitch claims, become more effective over time thanks to machine learning.

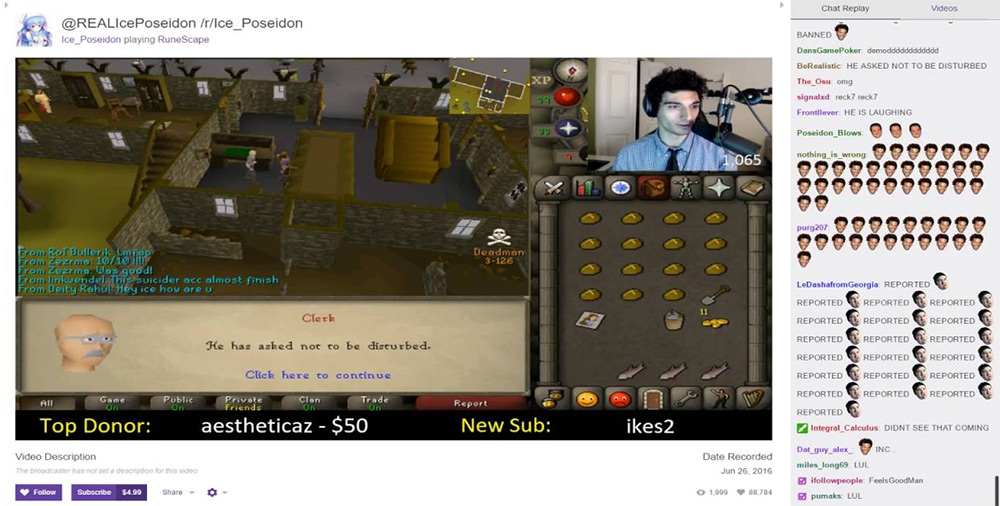

Viewing any popular Twitch stream's chat is a near-impossible task – the messages simply fly by too fast to keep up. However, if you do manage to break through the maelstrom and see what's beyond, you may find at least a few people using the platform to be abusive or hateful. Hey, it's the internet, right? But that's something Twitch hopes to put a stop to with AutoMod.

This isn't the kind of tool that will just force people who swear to space out their words to avoid the filters, though; AutoMod should leave harmless swearing alone. It's when the tone of a message is aggressive towards another person, be they a chat user or streamer, that AutoMod should step in. Understandably, Twitch's parent firm, Amazon, hasn't explained how it does this, as that would allow people to circumvent the filtering. But we are told it will get better over time as it learns to analyse conversations more accurately.

Source: KingDenino/YouTube

It will certainly have a bevy of content to work with and learn from.

Currently available in English – though there are beta tools available for Arabic, French, German, Russian and more – AutoMod is likely to ruffle a few feathers during its first few weeks of use, as it will probably be either too heavy-handed or too lax while it learns the ropes. But in time it could well become much more capable than any human moderation team could ever hope to be.

“We equip streamers with a robust set of tools and allow them to appoint trusted moderators straight from their communities to protect the integrity of their channels,” Twitch moderation lead Ryan Kennedy said (via VentureBeat). “This allows creators to focus more on producing great content and managing their communities. By combining the power of humans and machine learning, AutoMod takes that a step further. For the first time ever, we’re empowering all of our creators to establish a reliable baseline for acceptable language and around the clock chat moderation.”


KitGuru Says: Usually I'm not a fan of filtering or moderation, but I can understand the difficulties Amazon faces here. Some streams have tens or even hundreds of thousands of simultaneous viewers, all of whom can contribute to chat at once. That's simply impossible to moderate with human hands. Something like AutoMod may be the only way to instil some base level of civility in a chat that size.

KitGuru KitGuru.net – Tech News | Hardware News | Hardware Reviews | IOS | Mobile | Gaming | Graphics Cards


hate speech banning is a slippery slope..
