KitGuru was recently invited to preview Intel's 2021 Architecture Day, which revealed significant updates to several of the company's product lines. As part of this, we got to hear more about the future of Intel's Arc high-performance discrete GPUs, including confirmation that these will be manufactured on TSMC's N6 node. Intel's DLSS competitor, Xe Super Sampling (XeSS), was also demonstrated publicly for the first time.

While we have heard plenty about Intel's new Alder Lake CPU architecture and its mix of Performance and Efficiency cores, Intel's step into the high-performance discrete GPU market has been a lesser-known quantity. Until now, that is, with the company unveiling the Intel Arc branding earlier this week, alongside the promise of availability in Q1 2022.

At Architecture Day 2021, we got a closer look into the inner workings of the Xe-HPG architecture itself, where Intel claims to offer the compute efficiency of Xe-HPC, the scalability of Xe-HP and the efficiency of Xe-LP. This starts with the Xe-core, the ‘compute building block' of Xe-HPG, offering 16 Vector engines (256-bit per engine) and 16 Matrix engines (1024-bit per engine), the latter of which are used to accelerate AI workloads.

Four Xe-cores are grouped together in what Intel refers to as a Render slice, with four Ray Tracing Units also present that handle ray traversal, bounding box and triangle intersections. Intel is keen to emphasise its focus on DirectX 12 Ultimate features thanks to its geometry pipeline, samplers and pixel backends.

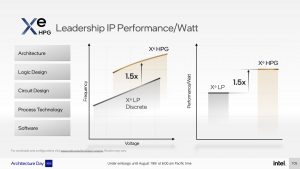

With a seemingly highly scalable design – a block diagram was shown totalling eight render slices, for 32 Xe-cores – Intel is not shy about claiming ‘leadership' performance per watt, with Xe-HPG shown to deliver 1.5x the frequency of Xe-LP at a given voltage point, as well as 50% higher performance per watt.
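To put those figures together, here is a quick back-of-the-envelope tally in Python of what the eight-slice block diagram implies. The per-core and per-slice counts are the ones Intel presented; the FP32 lane count assumes each 256-bit vector engine is treated as eight 32-bit lanes, which is our assumption rather than a stated spec, and the function name is purely illustrative.

```python
# Figures quoted from Intel's Architecture Day 2021 presentation.
VECTOR_ENGINES_PER_XE_CORE = 16
VECTOR_ENGINE_WIDTH_BITS = 256
MATRIX_ENGINES_PER_XE_CORE = 16
XE_CORES_PER_RENDER_SLICE = 4
RT_UNITS_PER_RENDER_SLICE = 4

# Assumption (not from Intel): a vector engine is split into 32-bit lanes.
ASSUMED_LANE_WIDTH_BITS = 32


def xe_hpg_totals(render_slices):
    """Return unit counts for a hypothetical Xe-HPG part with this many slices."""
    xe_cores = render_slices * XE_CORES_PER_RENDER_SLICE
    vector_engines = xe_cores * VECTOR_ENGINES_PER_XE_CORE
    lanes_per_engine = VECTOR_ENGINE_WIDTH_BITS // ASSUMED_LANE_WIDTH_BITS
    return {
        "xe_cores": xe_cores,
        "vector_engines": vector_engines,
        "matrix_engines": xe_cores * MATRIX_ENGINES_PER_XE_CORE,
        "ray_tracing_units": render_slices * RT_UNITS_PER_RENDER_SLICE,
        "assumed_fp32_lanes": vector_engines * lanes_per_engine,
    }


if __name__ == "__main__":
    # The block diagram shown by Intel totalled eight render slices.
    for name, value in xe_hpg_totals(8).items():
        print(f"{name}: {value}")
```

Under those assumptions, the eight-slice diagram works out to 32 Xe-cores, 512 vector engines, 512 matrix engines and 32 Ray Tracing Units.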

This comes alongside the news that Alchemist GPUs will be built on TSMC's N6 process node. We've known for a while that Intel will be using an external foundry for Xe-HPG, and this is confirmation of Intel's choice. For those currently lamenting the lack of GPU supply, Intel using TSMC isn't going to help, but the company told us it is maintaining a degree of flexibility here. When asked what other nodes, if any, were considered, Intel responded:

“We can't share details, but collaboration with TSMC for our graphics products is a great example of our IDM 2.0 strategy. We have the flexibility to decide on the right node for the right products and (in the case of Ponte Vecchio) combine different process nodes into one product.”

Stuart Pann, Intel's Corporate Planning Group SVP, expanded on this by saying:

“These Xe graphics products are part of the first phase of evolution, where we are tapping into another foundry’s advanced nodes for the first time. The reason is simple: Just as our designers use the right architecture for the right workload, we also choose the node that best fits that architecture. At this point in time, these foundry nodes are the right choice for our discrete graphics products.”

This suggests that while Alchemist will use TSMC, there are no guarantees for Battlemage, Celestial and Druid, the next three generations of Intel Arc. For now, TSMC's SVP of Business Development, Dr. Kevin Zhang, states that N6 ‘provides an optimal balance of performance, density and power efficiency that are ideal for modern GPUs.'

As to why Intel decided now is the time to step into the dGPU market, the company pointed to over 1.5 billion gamers around the world, with game streams hitting a total watch time of 8.8 billion hours. In the words of Raja Koduri, with Xe-HPG Intel wants to ‘remove friction and deliver high performance graphics experiences to everyone'.

Interestingly, Intel also highlighted the fact that the industry has expanded to accommodate over 13 million game developers, many of whom have only ever coded with AMD and Nvidia GPUs in mind. When asked how important it is for Intel to work with game studios to optimise for Xe-HPG, we heard the following:

“Intel has very strong and close working relationships with all game developers, from emerging developers to well-established developers. Our ISV and engineering teams work very closely with them to ensure game engines and corresponding visual effects, features and technologies are optimized across both CPU and GPU hardware. As game developers begin to tap into the Xe architecture that underpins gaming platforms across affordable notebook platforms all the way up to enthusiast-grade desktop PC systems, gamers should expect to see even more benefits to their gaming experiences.”

Indeed, we already know IO Interactive's Hitman 3 was developed with a helping hand from Intel, and with ray tracing announced but yet to arrive in the game, it wouldn't surprise us to see that happen around the launch of Alchemist GPUs next year. Chivalry 2 was also mentioned by name, and we saw a brief snippet of Unreal's UE5 Valley of the Ancient demo running on Intel hardware, but it is clear there is a lot of work to be done in this area.

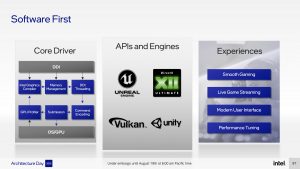

Lisa Pearce, Graphics Software Engineering Director, added to this by emphasising that Intel is taking a ‘software-first' approach. Part of this is the close collaboration with game developers, as stated above, but we also heard about some significant changes Intel has made to its driver design, with both integrated and dGPU products now covered by a ‘unified codebase.' Additionally, Intel has overhauled its driver memory manager and compiler, bringing a claimed 15% throughput improvement (reportedly as high as 80% in one specific scenario) for CPU-bound titles, while game load times are said to have improved by 25%.

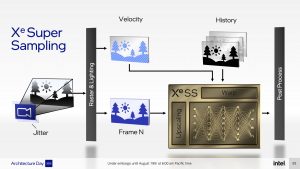

Upscaling and reconstruction techniques are proving ever more important. With Nvidia's DLSS 2.0+ proving successful, alongside AMD's FidelityFX Super Resolution, Intel had to come up with something or risk being left behind before the race even began. That something was announced as Xe Super Sampling, or XeSS, an AI-based technique that, Intel tells us, ‘works by reconstructing subpixel details from neighbouring pixels, as well as motion-compensated previous frames. This reconstruction is performed by a neural network trained to deliver high performance and great quality, with up to a 2x performance boost.'
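As a rough illustration of the ‘motion-compensated previous frames' idea only, the Python sketch below reprojects a previous frame using per-pixel motion vectors and blends it with the current frame. This is generic temporal accumulation, with a fixed blend weight standing in for the neural network. It is not Intel's algorithm, and every name and parameter here is hypothetical.

```python
def reproject(previous, motion):
    """Fetch, for each pixel, the previous-frame value it moved from.

    motion[y][x] is a hypothetical (dx, dy) integer offset describing how far
    the content now at (x, y) has moved since the previous frame.
    """
    height, width = len(previous), len(previous[0])
    history = [[0.0] * width for _ in range(height)]
    for y in range(height):
        for x in range(width):
            dx, dy = motion[y][x]
            # Clamp to the frame edge when the source falls off-screen.
            src_x = min(max(x - dx, 0), width - 1)
            src_y = min(max(y - dy, 0), height - 1)
            history[y][x] = previous[src_y][src_x]
    return history


def blend(current, history, alpha=0.1):
    """Mix the current frame with reprojected history (fixed weight, no AI)."""
    return [
        [alpha * c + (1.0 - alpha) * h for c, h in zip(cur_row, hist_row)]
        for cur_row, hist_row in zip(current, history)
    ]


if __name__ == "__main__":
    previous = [[0.0, 1.0, 2.0, 3.0]]
    current = [[4.0, 4.0, 4.0, 4.0]]
    # Everything has shifted one pixel to the right since the last frame.
    motion = [[(1, 0)] * 4]
    print(blend(current, reproject(previous, motion), alpha=0.5))
```

In a real reconstruction pipeline the blend would be decided per pixel, which is where a trained network comes in; the fixed `alpha` here simply shows where history and current data meet.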

We were given a first look at the technology in action, with Intel's demo showing a 1080p image upscaled to 4K via XeSS (you can see this in the video above). It didn't look quite as sharp as native 4K, but it was a heck of a lot better than a simple upscale of a native 1080p presentation. It's still early days though, so a full analysis of the technology will have to come later.
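For a sense of how much work the upscaler is doing in that demo, the short Python snippet below compares pixel counts. It assumes the standard definitions of 1080p (1920x1080) and 4K UHD (3840x2160); Intel did not spell out the exact resolutions in the material we saw.

```python
# Assumed standard resolutions for "1080p" and "4K" (UHD).
RENDER_RESOLUTION = (1920, 1080)
OUTPUT_RESOLUTION = (3840, 2160)


def pixel_count(resolution):
    """Total number of pixels for a (width, height) pair."""
    width, height = resolution
    return width * height


def upscale_factor(source, target):
    """How many output pixels must be produced per rendered pixel."""
    return pixel_count(target) / pixel_count(source)


if __name__ == "__main__":
    print("rendered pixels:", pixel_count(RENDER_RESOLUTION))
    print("output pixels:  ", pixel_count(OUTPUT_RESOLUTION))
    print("factor:         ", upscale_factor(RENDER_RESOLUTION, OUTPUT_RESOLUTION))
```

In other words, three out of every four pixels in the final 4K image have to be reconstructed rather than rendered directly.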

What is interesting is that Intel is opening up XeSS to run on its competition. We are told that ‘XeSS technology is based on dedicated AI acceleration hardware (XMX or Xe Matrix eXtensions) built into the first-generation of Xe HPG discrete GPUs', but the technology is also set to be enabled ‘on a broad set of hardware, including our competition. We [Intel] accomplished this by using the DP4a instruction, which is available on a wide range of shipping hardware, including Iris Xe integrated and discrete graphics.'
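For readers unfamiliar with DP4a, it is a four-way dot product of packed 8-bit integers accumulated into a 32-bit integer, which is the kind of arithmetic low-precision neural network inference leans on. The Python emulation below is a minimal sketch of the signed variant; the helper names are ours, and the wrap-around behaviour on overflow is an assumption, since real hardware and shader languages also offer unsigned and saturating forms.

```python
def pack_int8x4(values):
    """Pack four signed 8-bit integers into one 32-bit word (low byte first)."""
    word = 0
    for index, value in enumerate(values):
        word |= (value & 0xFF) << (8 * index)
    return word


def unpack_int8x4(word):
    """Split a 32-bit word back into four signed 8-bit integers."""
    result = []
    for index in range(4):
        byte = (word >> (8 * index)) & 0xFF
        result.append(byte - 256 if byte >= 128 else byte)
    return result


def dp4a(a, b, accumulator):
    """Emulate a signed DP4a: accumulator + dot(a[0..3], b[0..3])."""
    total = accumulator + sum(
        x * y for x, y in zip(unpack_int8x4(a), unpack_int8x4(b))
    )
    # Assumption: wrap to a signed 32-bit result rather than saturate.
    total &= 0xFFFFFFFF
    return total - (1 << 32) if total >= (1 << 31) else total


if __name__ == "__main__":
    a = pack_int8x4([1, 2, 3, 4])
    b = pack_int8x4([5, 6, 7, 8])
    print(dp4a(a, b, 10))
```

A single instruction therefore does four multiplies and four adds on 8-bit data, which explains why it is a reasonable fallback on GPUs without dedicated matrix hardware such as XMX.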

We're not yet certain how the two implementations will differ, with one of Intel's slides suggesting only a small increase to frame render times when using DP4a rather than XMX, but again we will have to wait and see. The XMX version will be available first though, with the SDK available to ISVs this month, while Intel says the DP4a version is set to become available later this year.

KitGuru says: While we don't yet have an idea of how well Intel's Alchemist GPUs will perform, the early signs are promising – let's hope they deliver on that promise in Q1 of next year.

KitGuru KitGuru.net – Tech News | Hardware News | Hardware Reviews | IOS | Mobile | Gaming | Graphics Cards