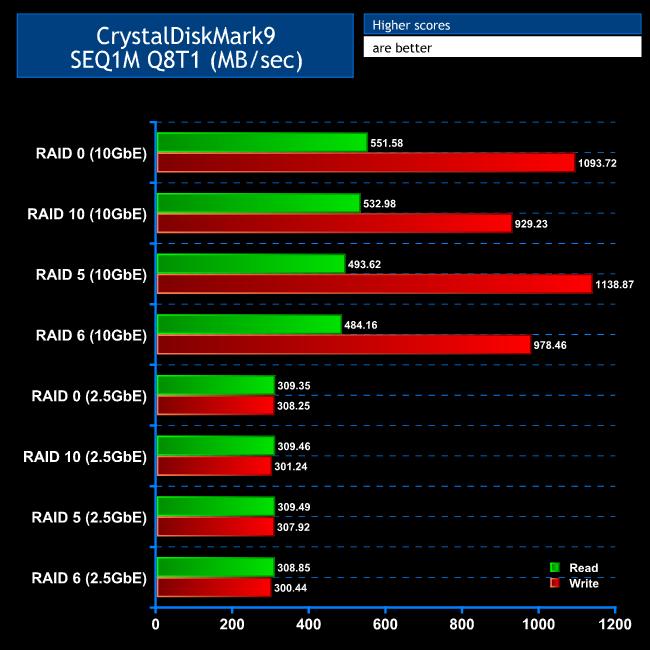

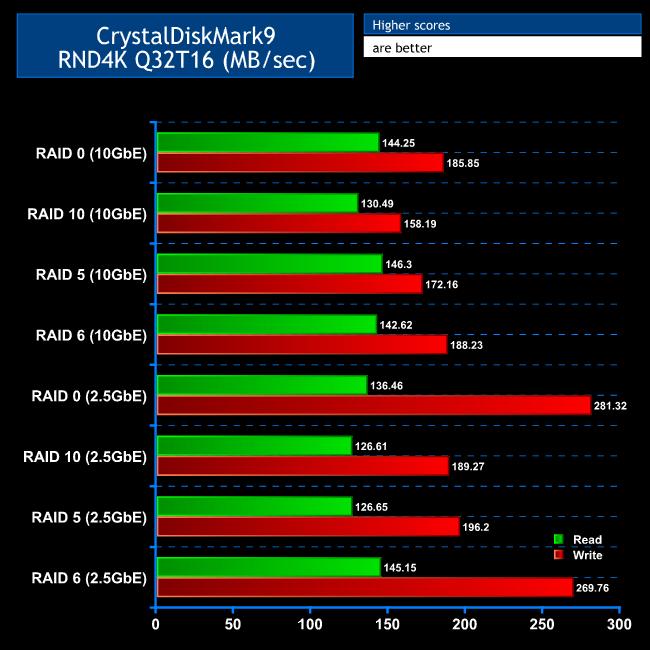

CrystalDiskMark is a useful benchmark for measuring the theoretical performance of storage devices. We used version 9, running the SEQ1M Q8T1 test to simulate large file transfers and the RND4K Q32T16 test to simulate small, random file transfers.
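For readers unfamiliar with the test names, here is a minimal Python sketch that decodes them. It assumes the usual CrystalDiskMark convention (SEQ/RND for the access pattern, a block size in KiB or MiB, then Q for queue depth and T for thread count). The `CdmTest` class and `parse_test_name` helper are our own illustrative names, not part of CrystalDiskMark.

```python
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class CdmTest:
    pattern: str      # "sequential" or "random"
    block_bytes: int  # size of each I/O request in bytes
    queue_depth: int  # requests kept in flight per thread
    threads: int      # number of worker threads

    @property
    def outstanding_ios(self) -> int:
        # Total requests that can be in flight at once.
        return self.queue_depth * self.threads


# Assumption: K and M are binary units (KiB, MiB).
_UNITS = {"K": 1024, "M": 1024 ** 2}
_NAME = re.compile(r"^(SEQ|RND)(\d+)([KM])\s+Q(\d+)T(\d+)$")


def parse_test_name(name: str) -> CdmTest:
    """Decode a test name such as 'SEQ1M Q8T1' into its parameters."""
    match = _NAME.match(name.strip())
    if match is None:
        raise ValueError(f"unrecognised test name: {name!r}")
    kind, size, unit, queue_depth, threads = match.groups()
    return CdmTest(
        pattern="sequential" if kind == "SEQ" else "random",
        block_bytes=int(size) * _UNITS[unit],
        queue_depth=int(queue_depth),
        threads=int(threads),
    )


for test_name in ("SEQ1M Q8T1", "RND4K Q32T16"):
    test = parse_test_name(test_name)
    print(test_name, test, "in flight:", test.outstanding_ios)
```

Under that reading, the sequential test keeps 8 one-megabyte requests in flight from a single thread, while the random test keeps 512 four-kilobyte requests in flight across 16 threads.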

This test highlighted an issue with very high bandwidth networking such as 10Gbit LAN. Read results were very slow on every array we tried until we set the MTU to 9,000 (up from the default 1,500) on the NAS NICs and raised the Jumbo Packet value on our 10Gbit NIC to 9,014 to match.
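To give a feel for why the MTU matters at 10Gbit, the sketch below estimates how many frames per second each end has to handle at full line rate, and how much of each frame is payload. It assumes standard Ethernet framing overhead and plain IPv4 plus TCP headers with no options; the function names are ours, and the figures are theoretical ceilings rather than anything we measured. The 9,014 value on the client most likely counts the 14-byte Ethernet header on top of a 9,000-byte MTU, although that depends on the NIC driver.

```python
# Bytes on the wire per frame that the MTU does not count:
# preamble + start delimiter (8), Ethernet header (14),
# frame check sequence (4) and inter-frame gap (12).
ETHERNET_OVERHEAD = 38

# Assumption: IPv4 (20 bytes) plus TCP (20 bytes), no header options.
IP_TCP_HEADERS = 40


def frames_per_second(link_gbit: float, mtu: int) -> float:
    """Frames per second needed to saturate the link with full-size frames."""
    wire_bytes = mtu + ETHERNET_OVERHEAD
    return link_gbit * 1e9 / 8 / wire_bytes


def payload_efficiency(mtu: int) -> float:
    """Fraction of on-wire bytes that carry TCP payload."""
    return (mtu - IP_TCP_HEADERS) / (mtu + ETHERNET_OVERHEAD)


for mtu in (1500, 9000):
    print(
        f"MTU {mtu}: {frames_per_second(10, mtu):,.0f} frames/sec at 10Gbit, "
        f"{payload_efficiency(mtu):.1%} payload"
    )
```

The payload share only improves by a few percentage points, but the frame rate drops to roughly a sixth, which means far less per-packet work for the NAS and the client. That is a plausible explanation for the improvement we saw, not a confirmed one.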

The SEQ1M Q8T1 test simulates large contiguous file transfers. With the 10Gbit connection, RAID 0 and RAID 10 are fastest for reading because they stripe data across drives for performance, but RAID 5 and RAID 6 aren't far behind. The write speeds get close to wire speed for 10Gbit, which suggests the synthetic test is writing to the drive caches rather than the drives themselves.

With a 2.5Gbit Ethernet connection, the network is clearly the bottleneck, as all RAID types delivered a little over 300MB/sec.
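The arithmetic behind that ceiling is simple enough to sketch. The snippet below converts a nominal line rate into decimal megabytes per second and shows what share of it a given result represents. It ignores protocol overhead, and the 300MB/sec input is our rounded figure rather than an exact reading; the function names are ours.

```python
def line_rate_mb_per_sec(link_gbit: float) -> float:
    """Raw line rate in decimal MB/sec (1 Gbit = 1000 Mbit, 8 bits per byte)."""
    return link_gbit * 1000 / 8


def share_of_line_rate(observed_mb_per_sec: float, link_gbit: float) -> float:
    """Fraction of the raw line rate that an observed throughput uses."""
    return observed_mb_per_sec / line_rate_mb_per_sec(link_gbit)


print(f"2.5Gbit ceiling: {line_rate_mb_per_sec(2.5)} MB/sec")
print(f"10Gbit ceiling:  {line_rate_mb_per_sec(10)} MB/sec")
print(f"300MB/sec on 2.5Gbit uses {share_of_line_rate(300, 2.5):.0%} of the link")
```

A result just over 300MB/sec is therefore within a few percent of what 2.5Gbit Ethernet can carry at all, which is why every RAID level lands in the same place.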

For large file transfers, you can expect up to 500MB/sec reading with any of the tested RAID levels over 10Gbit Ethernet, and a still respectable 300MB/sec over 2.5Gbit Ethernet. We checked this by copying a 15GB Zip file to and from a RAID 5 array, which confirmed approximately that level of performance for both reading and writing.
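To put those rates in practical terms, this short sketch estimates how long a file of that size takes to copy at each speed. It assumes decimal units (1GB = 1,000MB) and a sustained rate for the whole transfer, so treat the results as rough estimates rather than timings we recorded.

```python
def transfer_seconds(file_gb: float, rate_mb_per_sec: float) -> float:
    """Estimated copy time, assuming decimal units and a constant rate."""
    return file_gb * 1000 / rate_mb_per_sec


for label, rate in (("10Gbit", 500), ("2.5Gbit", 300)):
    print(f"15GB over {label} at {rate}MB/sec: {transfer_seconds(15, rate):.0f} sec")
```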

The RND4K Q32T16 benchmark simulates small file copies, and there is greater performance variation, although you're still getting decent read and write speeds. RAID 0 and RAID 5 are slightly faster at reading with 10Gbit Ethernet, but the LAN connection speed makes less of a difference here because the bottleneck has mostly shifted to the mechanical hard drives.

KitGuru KitGuru.net – Tech News | Hardware News | Hardware Reviews | IOS | Mobile | Gaming | Graphics Cards
