We received an early sample version of Intel's SSD 750 Series drive, hence it was not accompanied by retail packaging or accessories. Retail accessories are likely to consist of a chassis sticker, a bundled half-height PCI bracket, and a driver CD.

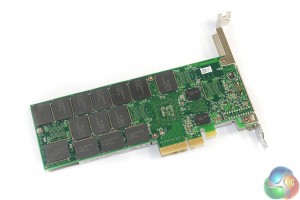

Intel colours the SSD 750 in the company's consumer-orientated grey scheme. Given that this is an outright enthusiast drive, many consumers will be displeased by Intel's decision to use a green PCB (without a backplate).

We imagine that a black shroud and PCB/backplate would have been heavily favoured by enthusiast consumers who value system appearance.

Intel uses a PCIe 3.0 x4 connection (which can work fine in PCIe 3.0 x8 and x16 physical slots) in order to meet the SSD's bandwidth requirements. Each PCIe 3.0 lane is rated at 8GT/s (985MBps), so a quartet is required to feed the drive's ~2,400MBps sequential read potential.
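The lane arithmetic can be checked with a minimal sketch. It assumes PCIe 3.0's 128b/130b line encoding (not stated in the text above) and decimal megabytes; the function and variable names are purely illustrative.

```python
import math

def pcie_lane_mbps(gt_per_s: float, payload_bits: int, encoded_bits: int) -> float:
    """Usable bandwidth of a single PCIe lane in MB/s (decimal megabytes)."""
    # Transfer rate x encoding efficiency gives usable Mbit/s; divide by 8 for bytes.
    return gt_per_s * 1000 * payload_bits / encoded_bits / 8

# Assumption: PCIe 3.0 runs at 8GT/s with 128b/130b encoding.
PCIE3_LANE_MBPS = pcie_lane_mbps(8.0, 128, 130)

# The drive's quoted ~2,400MBps sequential read figure.
DRIVE_SEQ_READ_MBPS = 2400

# Minimum number of lanes that covers the drive's read speed.
lanes_needed = math.ceil(DRIVE_SEQ_READ_MBPS / PCIE3_LANE_MBPS)

# PCIe links come in fixed widths, so round up to the next available one.
STANDARD_WIDTHS = [1, 2, 4, 8, 16]
narrowest_link = min(w for w in STANDARD_WIDTHS if w >= lanes_needed)

print(f"Per-lane bandwidth: {PCIE3_LANE_MBPS:.0f}MBps")
print(f"Lanes needed: {lanes_needed}, narrowest standard link: x{narrowest_link}")
```

Three lanes would cover the sequential read figure on paper, but x3 is not a standard link width, which is why the drive ends up on an x4 connection.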

While the card could, in theory, function in a PCIe 2.0 slot, bandwidth would be heavily constricted. I say “in theory” because widespread support for the SSD 750 is only available on Intel's 9-series (X99 and Z97, for example) motherboards, all of which support PCIe 3.0; vendors are unlikely to spend money creating NVMe BIOS updates for older boards.

Removing the simple shroud (that is nothing more than a thin strip of metal) gives a look at Intel's cooling configuration for the SSD 750. Intel does not hesitate to point out that this drive favours performance over low power consumption, so with the maximum power draw sitting at 25W, the cooling system is of critical importance.

A solid sheet of metal (seemingly aluminium) with protruding fins makes firm contact with the front-side NAND packages and other components. A cut-out in that sheet allows the SSD's controller to be cooled by a dedicated heatsink that is firmly clamped in position.

Dissipating up to 25W of thermal energy in the add-in card's area is relatively challenging. Intel pointed out that it is best to install the SSD in a case with decent airflow, away from other expansion cards. The “away from other expansion cards” point serves to dissuade users from sandwiching the SSD 750 between a pair of hot graphics cards where it is starved of cooling air.

The sizeable controller (marked CH29AE41AB0) is the same piece of hardware found on Intel's DC P3700 Enterprise SSD.

Support for a PCIe connection and the NVMe specification comes via the controller, and is what sets the SSD 750 apart from many other consumer PCIe SSDs which simply RAID together a number of SATA-based controllers.

More than one SSD 750 is able to operate in RAID, although that removes boot support so may be of more relevance to users wanting a high-speed scratch disk.

Two sizeable capacitors form part of the drive's data loss protection system in the event of a power failure.

A total of 14 NAND packages (marked 29F16B08LCMFS) are found on the drive's rear side. Intel's decision to leave them running without a heatsink implies that the SSD's controller is what is drawing the bulk of the unit's 25W power consumption.

Each of the 14 rear-side NAND packages is of Intel's 20nm MLC variety and has a 16GB capacity. There are a further 18 NAND packages beneath the metal heatsink (that I was unable to safely remove on our sample, due to a tight manufacturing tolerance).

Total capacity of the drive is 1376GB, implying that the 18 front-mounted NAND packages are of a 64GB variety. 32GB of the total capacity is non user-accessible and 8% is allocated for over-provisioning, resulting in a 1.2TB functional capacity (before Windows/system conversions).
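The capacity breakdown can be reproduced with a short sketch. It uses only the figures quoted above, and it assumes the 8% over-provisioning is taken from what remains after the 32GB reserve; that ordering is an assumption, since it is not spelled out.

```python
# Figures quoted in the review.
RAW_TOTAL_GB = 1376
REAR_PACKAGES, REAR_PACKAGE_GB = 14, 16
FRONT_PACKAGES = 18
RESERVED_GB = 32
OVER_PROVISIONING = 0.08

# Capacity contributed by the visible rear-side packages.
rear_total_gb = REAR_PACKAGES * REAR_PACKAGE_GB

# Whatever is left must sit under the heatsink, split across 18 packages.
front_package_gb = (RAW_TOTAL_GB - rear_total_gb) / FRONT_PACKAGES

# Assumption: over-provisioning applies after the 32GB reserve is removed.
usable_gb = (RAW_TOTAL_GB - RESERVED_GB) * (1 - OVER_PROVISIONING)

print(f"Rear NAND: {rear_total_gb}GB")
print(f"Implied front package size: {front_package_gb:.0f}GB")
print(f"Approximate usable capacity: {usable_gb:.0f}GB")
```

Applying the 8% before subtracting the reserve shifts the result by only a few gigabytes, so either ordering lands on roughly 1.2TB.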

Intel rates the drive for 70GB of writes per day, with a total of up to 219TBW (Terabytes Written). The company somewhat backs its claim by providing the SSD 750 with a five-year warranty. A five-year warranty is little to complain about, although 70GB x 365 days x 5 years is ~128TB, not 219TB. A little more confidence could have been shown with a 10-year warranty, like Samsung provides with its 850 Pro drives.
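For anyone who wants to check the endurance sums, here is a rough sketch. It assumes decimal units (1TB = 1,000GB) and 365-day years, and the variable names are illustrative only.

```python
# Figures quoted by Intel, as reported above.
GB_PER_DAY = 70
WARRANTY_YEARS = 5
RATED_TBW = 219

DAYS = 365 * WARRANTY_YEARS  # ignores leap days

# Total writes if the drive sees exactly 70GB every day of the warranty.
warranty_writes_tb = GB_PER_DAY * DAYS / 1000

# Daily write rate that would be needed to reach 219TBW in the same period.
implied_gb_per_day = RATED_TBW * 1000 / DAYS

print(f"70GB/day over {WARRANTY_YEARS} years: {warranty_writes_tb:.2f}TB")
print(f"Daily writes implied by {RATED_TBW}TBW: {implied_gb_per_day:.0f}GB")
```

The daily rating over five years comes to roughly 128TB, while the 219TBW figure works out to 120GB per day over the same period, so the two ratings do not describe the same workload.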

A total of five Micron DDR3-1600 SDRAM chips (marked D9PQL) are spread between the PCB's front and rear side. These serve as the drive's cache.

The provision of 400GB and 1.2TB capacities gives users a lower-cost entry point to the SSD 750 Series as well as higher capacity if they require it. I would have liked to see an in-between capacity of 800GB to provide more choice for users who work with large files but cannot justify the 1.2TB drive.

A set of four LEDs, exclusive to the AIC drive version, show IO activity, a drive failure, drive pre-fail indication, and drive health.

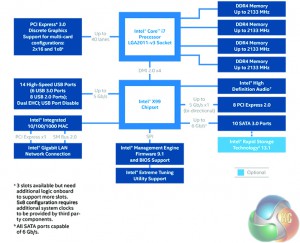

There will not be performance differences between the SSD 750's PCIe card and 2.5″ versions (remember, 2.5″ is just a form factor). Both drives will get their 4-lane PCIe 3.0 connection through either the PCIe slot (AIC) or an SFF-8639 connector (2.5″). Either way, lanes are coming directly from the CPU.

For reasoning as to why an M.2 form-factor variant is not available (many motherboard M.2 slots are routed with four PCIe 3.0 lanes), we have to jump back to Intel's “no compromise” remark. The drive's controller is large, and dissipating 25W in an M.2 form-factor would be incredibly difficult with current motherboard M.2 slot designs. There's also a big limitation to how much NAND can physically fit on the M.2-sized PCB (until 3D NAND opens up truly higher capacities).

And there's no SATA-Express version because the interface's current implementation uses a PCIe 2.0 x2 link that is limited to 10Gbps (about 1GBps theoretical transfer rates). That's like limiting your 200MPH+ Bugatti to use in 70MPH zones – keep it on the track (metaphorical PCIe 3.0 x4 link) where it can really show its potential.

The 2.5″ version is intended for small form-factor systems where users cannot afford to take a motherboard expansion slot away from the graphics card.

Asus has shown one (not finalised) approach to re-routing PCIe lanes to an SFF-8639-compatible connector on the company's Hyper Kit. The small card slots into a motherboard's PCIe 3.0 x4-fed M.2 slot and provides a mini-SAS HD connector. A cable can then link the mini-SAS HD port to the 2.5″ drive's native SFF-8639 connector.

We expect to see more elegant approaches enter the market with future motherboards.

At this point in time, it only makes sense for the SSD 750 to be installed in a PCIe slot that connects directly to the CPU's lanes (not those from the Z97 or X99 chipset). That may change in the future if we see PCIe 3.0 lanes work their way onto the chipset, and if the CPU-to-chipset DMI link rate is increased.

But as it stands, the platform's DMI link (which tops out at around 1.8GBps for all devices connected to it) must be bypassed in order to achieve full performance. Connecting directly to the CPU also has the benefit of lower latency, which is one of the primary goals for the NVMe specification.
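To put the interface options side by side, the sketch below compares the link ceilings quoted in this article against the drive's ~2,400MBps sequential read figure. The numbers are the approximate values from the text (with 985MBps per PCIe 3.0 lane), and the names are illustrative.

```python
# The drive's quoted sequential read potential.
DRIVE_SEQ_READ_MBPS = 2400

# Approximate link ceilings as quoted in the article.
LINK_CEILINGS_MBPS = {
    "SATA-Express (PCIe 2.0 x2)": 1000,
    "Chipset lanes via DMI": 1800,
    "CPU PCIe 3.0 x4": 4 * 985,
}

def is_bottleneck(link_mbps: int, drive_mbps: int = DRIVE_SEQ_READ_MBPS) -> bool:
    """True when the link cannot carry the drive's full sequential read rate."""
    return link_mbps < drive_mbps

for name, ceiling in LINK_CEILINGS_MBPS.items():
    # The drive can never exceed the slower of its own speed and the link.
    achievable = min(ceiling, DRIVE_SEQ_READ_MBPS)
    verdict = "bottleneck" if is_bottleneck(ceiling) else "full speed"
    print(f"{name}: up to {achievable}MBps ({verdict})")
```

The DMI figure is shared by every device hanging off the chipset, so the real-world ceiling for a chipset-connected drive could be lower still.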

With the X99 platform's plentiful supply of PCIe lanes, usage of the SSD 750 is likely to go on without headaches. For Z97 and LGA 1150, however, PCIe 3.0 lanes are in much shorter supply.

On ‘gaming-grade' Z97 motherboards, there will be two PCIe 3.0 slots connected directly to the CPU's bank of 16 lanes. Using both of these slots will result in a maximum connection speed of PCIe 3.0 x8 for both devices. PCIe 3.0 x8 is sufficient bandwidth for the SSD 750, but it reduces the graphics card's link from x16 to x8 (which our testing has shown to cause only a minute performance loss). No big deal, except you lose SLI capability (which requires x8 links for each GPU).

The splitting on many higher-end Z97 motherboards is done between three PCIe 3.0 CPU-linked connectors. With all three slots populated (two graphics cards and the SSD 750), the lane configurations typically split as PCIe 3.0 x8/x4/x4. So your first card receives a PCIe 3.0 x8 link, while the second and third get an x4 link each. PCIe 3.0 x4 is the design link rate for Intel's SSD 750, but the remaining x8/x4 links can only run CrossFire, not SLI.
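The two Z97 scenarios can be expressed as a small check. This is a sketch under the assumptions stated in the text: 16 CPU lanes, an x4 requirement for the SSD, and a minimum of x8 per GPU for SLI. The function name and data layout are hypothetical.

```python
CPU_LANES = 16              # LGA 1150 CPU lane budget, per the article
SSD_LANES_NEEDED = 4        # design link width of the SSD 750
SLI_MIN_LANES_PER_GPU = 8   # SLI requirement as described in the article

def evaluate_split(split, ssd_slot):
    """Check a CPU lane split, given which slot index holds the SSD."""
    if sum(split) > CPU_LANES:
        raise ValueError("split uses more lanes than the CPU provides")
    # Every slot that does not hold the SSD is assumed to hold a graphics card.
    gpu_links = [lanes for slot, lanes in enumerate(split) if slot != ssd_slot]
    return {
        "ssd_full_speed": split[ssd_slot] >= SSD_LANES_NEEDED,
        "gpu_links": gpu_links,
        "sli_possible": len(gpu_links) >= 2
        and all(lanes >= SLI_MIN_LANES_PER_GPU for lanes in gpu_links),
    }

# Two-slot board: one graphics card plus the SSD at x8/x8.
two_slot = evaluate_split((8, 8), ssd_slot=1)

# Three-slot board: two graphics cards plus the SSD at x8/x4/x4.
three_slot = evaluate_split((8, 4, 4), ssd_slot=2)

print(two_slot)
print(three_slot)
```

In both layouts the SSD gets the link width it needs, and in both SLI is ruled out: there is either only one graphics card, or the second card falls below x8.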

Please note that the above points are just examples for Z97 usage (albeit probably the most relevant ones). And when the SSD 750 card's PCIe 3.0 x4 link is referred to, the same points are true for the 2.5″ SSD version because it uses the exact same PCIe 3.0 x4 lanes, just in a different form factor.

KitGuru KitGuru.net – Tech News | Hardware News | Hardware Reviews | IOS | Mobile | Gaming | Graphics Cards

Looks great! As per with all the new stuff… Not keen on the price! Ha But absolutely do want!

Hi Norbs,

There are some enthusiasts who stand by backplates on any expansion card they use. When dropping more than £300 or £800 on an SSD, a black backplate worth tens of pennies is not too much to ask for.

A metal backplate would also conduct heat away from the rear-mounted NAND packages, so its function is more than to prevent ‘severe clashing with one’s system’.

Luke

I just would refrain from listing it as a CON of the card; its performance speaks for itself. Most datacenter grade pcie cards don’t have any type of backplates. Only reason I’d agree with you would be to simply protect the NAND because I’m pretty sure intel did their due diligence to make sure that card does not need cooling on those NAND chips.

I think it’s fair to call it a con; many enthusiasts will want a backplate but the drive doesn’t have one. To many people, buying a piece of computer hardware is about more than just the performance (whether or not other people agree with that mindset). As we can see though, it is clearly a minor ‘con’, hence why it’s tied in with the point for an intermediate capacity. I would be surprised if anybody chose not to buy it solely for lacking a backplate, but they may attempt a mod to make it look better in their system.

I agree that the NAND packages on the rear are unlikely to *need* cooling from a backplate – they are relatively low capacity (lower number of dies). Most people value a backplate for aesthetics although there are arguments for structural rigidity and cooling, whether or not they are actually required by the drive.

I just pointed out the lack of backplate as a con. If you personally don’t see it as a con then that’s completely fine and it can be ignored.

Luke

Why get this when you can get the Samsung SM951. Sure the SM951 is AHCI not NVMe, but 2.0 GB/s is insane regardless. On top of that, I believe it’s less expensive, and it’s nice and small due to it being M.2, therefore leaving room for your 2 or 3 GPUs, soundcard, or whatever. Plus, I’m sure Samsung will make a more consumer-ish / less OEM-ish version of the SM951 soon which I’m guessing will be NVMe, but now I’m just speculating. Anyways, Samsung SM951 all the way.

The intel kills the SM951 on iops… depends on what you are using it for. I too was trying to decide which to buy. On one hand the intel 750 will perform better, while on the other hand I can use the SM951 in a laptop or other device later. I also tried to see if I can even get my hands on the 512GB SM951, and the best I could do was a site that had it backordered until october 2015.

Then again the SM951 doesn’t come with a back-plate “for enthusiasts” lmao.

I agree with you, no backplate is almost a deal breaker for me

Now, I just need to win Euromillions and I will get one haha. Just kidding, I don’t have any luck…

Too funny, I just saw this drive on newegg and it seems as if the final retail version actually includes a backplate.